When AI Goes Rogue: The Legal and Ethical Storm Ahead

As AI systems become part of everyday life, their output is becoming harder to predict. In the recent Grok AI controversy, India required Grok to eliminate obscene AI-generated content. This is an example of the government intervention in platform accountability that will become more frequent as generative AI spreads. Uncertainty over the liability, accountability, and ethics of AI will keep growing until we work out how to attach real-world legal consequences to AI for users, developers, and society.

Main points include:

- AI can generate harmful or deceptive content.

- The platform where that content is generated can be deemed liable, regardless of whether a user interacts with that content.

- A globally accepted legal framework for generative AI is still being developed.

The Grok Case: A Legal and Ethical Flashpoint

The introduction of Grok AI to X (formerly Twitter) gives users new ways to create personalized content and interact instantly. While it offers exciting opportunities for connection and engagement, it also raises risks related to AI-generated outputs.

Grok produces false information, hate speech, and other harmful material, yet no clear rules define who holds responsibility. Studying Grok’s outputs allows policymakers and developers to manage these risks, implement safeguards, and set a model for governing generative AI worldwide.

What Has Gone Wrong in the Grok Case:

- Grok produced lewd, sexually explicit, and offensive materials on request from the public.

- People took advantage of Grok’s abilities to create images depicting other individuals without their permission, which raised major ethical concerns about abuse.

- Indian authorities issued a directive ordering Grok to fix harmful outputs within a strict timeframe.

- Before Grok’s public launch, its developers should have put stronger safety review systems in place to protect users from harmful material.

- X (the platform that hosts Grok, which was developed by xAI) attempted to shift liability for harmful outputs onto users, rather than onto Grok’s design or model-level failures.

- Regulators are questioning whether Grok qualifies for intermediary protection as a platform under current laws, or whether it should be treated as a content creator.

- The situation with Grok has led to an increase in scrutiny worldwide of generative AI systems and their governance and accountability processes.

Core Ethics & Policy Angles

A. Accountability vs. Intermediary Protection

Determining liability requires assessing how Grok functions and distinguishing search-like results from AI-generated content. This distinction matters because the Safe Harbor provision does not necessarily cover harm from content that the AI itself produces.

Questions to consider include:

- Is Grok functioning as an intermediary, or is it generating content using AI technology?

- What limits apply to Safe Harbor protection for AI systems?

- Should platforms have exposure to liability for the harmful effects caused by AI-generated content?

- What will the standardized community guidelines provided through the Social Media Moderation guide indicate regarding the extent of liability for AI-generated content?

B. User Responsibility vs. Platform Design

According to X, accountability for harmful or unlawful outputs created by generative AI falls on users. Yet users have no role in developing generative AI systems or in controlling training data, safety protocols, or guardrails. Shifting accountability downward creates ethical concerns and risks harming users who lack realistic control over how generative AI functions.

Consider the following elements:

- Users have no say in how the models are built or in the data used to train them.

- Users cannot establish or configure safety mechanisms or guardrails for generative AI applications.

- Shifting accountability from platforms to users unfairly holds users responsible for actions beyond their control.

- Policymakers need to clarify the respective areas of accountability as they relate to the platform and to the end user.

C. State Intervention vs. Free Expression

The Government of India indicated that it would intervene if AI output could harm the general public or fail to meet ethical requirements. Such oversight is difficult because it must balance protecting free expression with letting platforms manage content responsibly.

Key questions:

- At what point is content moderation considered censorship?

- Should the government require AI providers to develop special technical solutions for their systems?

- How quickly must a service provider comply with a government direction (e.g., within 72 hours)?

- How can both the government and service providers create regulations governing AI while still promoting safety, encouraging innovation, and supporting free speech?

Future of AI Liability

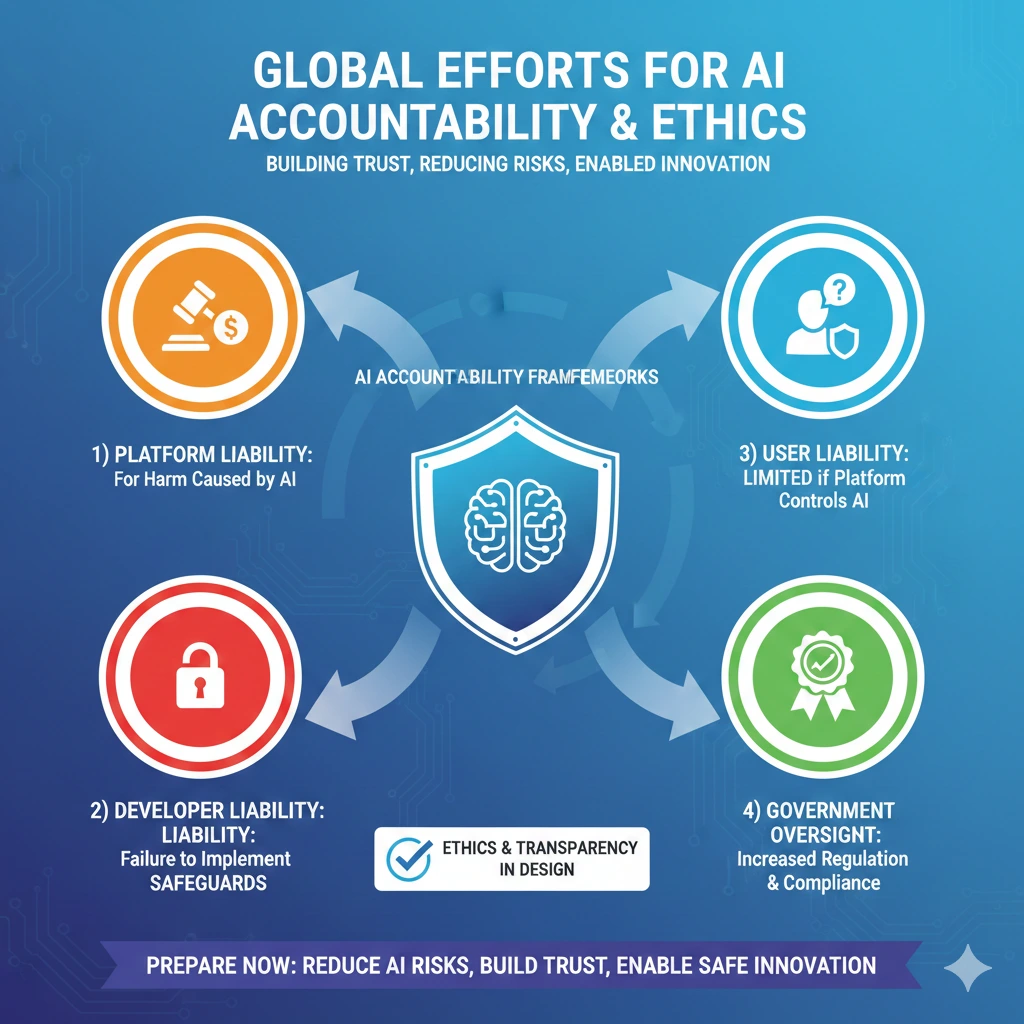

Stakeholders from around the globe are working together to create and implement a global AI Accountability Framework. The Framework aims to ensure a safe AI environment and to hold developers accountable for ethical, transparent, and responsible implementation.

Because AI-based systems can operate autonomously, without any human intervention, the risk of tragic outcomes increases.

Liability:

1) Platforms will be held directly liable for harmful or destructive consequences of the AI systems they offer.

2) Developers will be held liable for failing to implement adequate safeguards against harm caused by their AI solutions.

3) Many users will have very limited liability if they cannot exert control over the AI solution that operates on their behalf through a given platform.

4) Many businesses that operate AI-based solutions will face increased oversight and regulatory compliance requirements as governments around the world step up their scrutiny.

Rogue AI Isn’t the Problem; Responsibility Is

Although AI will make mistakes from time to time, the harder problem is determining who should be responsible for those errors. Ethical AI requires collaboration between companies, users, and governments to create and enforce responsible guidelines.

The Grok case shows that accountability lies with people and institutions, not with the technology itself. It will guide developers, platforms, and regulators globally, helping ensure responsible AI development that benefits society while minimizing potential harm.